An LLM can explain BGP failover from first principles, walk through OSPF route redistribution, and describe the known failure modes of every major vendor’s hardware. But dump your network logs into it and it will, at some point, give you a confident answer about your network that turns out to be wrong, because it has never seen your network.

This is because standard LLMs operate under a critical limitation: They contain zero information about your specific environment. They do not know your historical BGP peering patterns, your unique configuration drift accumulated over three migrations, or your specific application-to-subnet mapping.

So while the output these models generate is coherent, it is also frequently wrong.


When an LLM encounters a query about your network, it fills those knowledge gaps with its general probabilistic training data. The resulting response is authoritative and persuasive, but in a NetOps context, a coherent wrong answer is far more dangerous than no answer at all, because it gets acted on.

The Structural Problem

The instinct is often to solve AI accuracy by feeding the model more raw data: larger context windows, more flow logs, and more raw telemetry streams. This approach is usually counterproductive, because LLMs suffer performance degradation when critical information is buried in the middle of long, unstructured input. Adding more raw telemetry without adding structural context only compounds the problem.

The Core Solution: A “Context Engine” for Network Intelligence

LLMs suffer performance degradation when critical information is buried in unstructured telemetry.

What network AI actually requires is not more data, but a semantic infrastructure layer. It needs a structured, connected model of your network that an LLM can reason over, rather than a data dump it must make sense of.

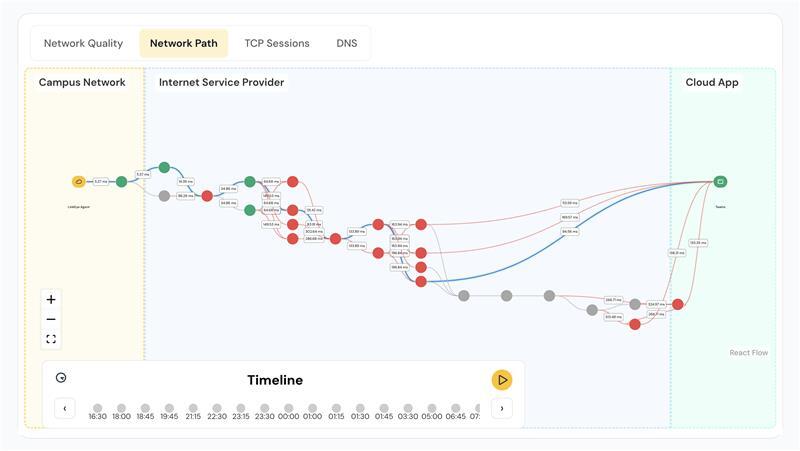

LinkEye delivers this through its Context Engine, which is architected to let LLMs like Claude or ChatGPT securely “plug in” to your network’s specific live data, documentation, and history. It translates the raw telemetry of your physical network infrastructure into an LLM-friendly format, and organizes this translation across four specific functions:

- Ingest Raw Telemetry: The engine pulls from diverse sources simultaneously: configuration files, ticketing systems, flow data, APIs, and syslog. It handles the “data fragmentation” symptom that prevents unified visibility.

- Structure AI-Ready Context: Raw telemetry is mapped into structured language that defines relationships and dependencies. It moves data from “a device has an IP” to “this BGP peer is connected to a circuit governed by specific SLA terms.”

- Correlate Cross-Layer Context: Structured data is unified into a semantic knowledge graph. This is where hidden patterns and root causes across disparate devices get detected, grounding the AI in the unique ‘normal’ of your specific baseline.

- Precision Retrieval: Instead of overwhelming an LLM with 10,000 lines of raw logs, this layer identifies and retrieves only the specific subgraph of entities and historical relationships that are relevant to the question being asked. By restricting the AI’s focus to these verifiable facts, we eliminate the primary cause of model hallucinations and ensure every answer is grounded in your actual network state.
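To make the last three functions concrete, here is a minimal sketch in Python. It is not LinkEye's implementation. Every device name, relation name, and function in it (`TRIPLES`, `build_graph`, `retrieve_subgraph`, `to_prompt_context`) is a hypothetical chosen for illustration. It assumes telemetry has already been structured into subject-relation-object facts, and it stands in for "precision retrieval" with a simple hop-limited breadth-first search.

```python
from collections import defaultdict, deque

# Hypothetical structured facts: (subject, relation, object).
# In a real system these would be derived from configs, tickets, flow data, etc.
TRIPLES = [
    ("bgp-peer-10.0.0.1", "runs_over", "circuit-A"),
    ("circuit-A", "governed_by", "sla-gold"),
    ("bgp-peer-10.0.0.1", "terminates_on", "edge-router-1"),
    ("edge-router-1", "serves", "subnet-10.20.0.0/24"),
    ("subnet-10.20.0.0/24", "hosts", "app-billing"),
    ("core-switch-7", "serves", "subnet-10.30.0.0/24"),
    ("subnet-10.30.0.0/24", "hosts", "app-wiki"),
]


def build_graph(triples):
    """Index every fact under both of its endpoints so it can be walked either way."""
    graph = defaultdict(list)
    for subj, rel, obj in triples:
        graph[subj].append((rel, obj, "out"))
        graph[obj].append((rel, subj, "in"))
    return graph


def retrieve_subgraph(graph, seed, max_hops=2):
    """Breadth-first search from `seed`; return only the facts within `max_hops`."""
    seen = {seed}
    facts = set()
    queue = deque([(seed, 0)])
    while queue:
        node, depth = queue.popleft()
        if depth == max_hops:
            continue  # do not expand past the hop limit
        for rel, other, direction in graph[node]:
            # Restore the original subject/object order of the fact.
            fact = (node, rel, other) if direction == "out" else (other, rel, node)
            facts.add(fact)
            if other not in seen:
                seen.add(other)
                queue.append((other, depth + 1))
    return sorted(facts)


def to_prompt_context(facts):
    """Render facts as short sentences that can be placed in an LLM prompt."""
    return "\n".join(f"{s} {r.replace('_', ' ')} {o}" for s, r, o in facts)


if __name__ == "__main__":
    graph = build_graph(TRIPLES)
    print(to_prompt_context(retrieve_subgraph(graph, "circuit-A", max_hops=2)))
```

A question about `circuit-A` pulls in only its BGP peer, its SLA, and the router the peer terminates on. The unrelated `core-switch-7` and `app-wiki` facts never reach the prompt. A production system would presumably rank facts by relevance rather than by hop count alone.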

Moving from Theory to Reliable Action

Precision Retrieval is the new benchmark for reliability in NetOps.

The question is no longer whether your enterprise AI is capable. It is whether your AI output is grounded in context and reality. In an operational environment where the cost of an ungrounded “hallucination” can reach $1 million per hour (Gartner), the difference between a smart model and a context-aware engine is the only metric that matters.

LinkEye’s Context Engine closes that gap, transforming complex, dark data into a secure and answerable network environment.